from torchgeo.datamodules import EuroSATDataModule
from torchgeo.datasets import EuroSAT100
import fastai.vision.all as fv

data utils

GeoImageBlock

GeoImageBlock (chnls_first=True)

Returns a TransformBlock that creates a GeoTensorImage object from an input image tensor.

Parameters

chnls_first (bool, optional): A boolean indicating whether the input tensor has channels as the first dimension. Default is True.

Returns

fastai.transforms.TransformBlock: A TransformBlock object that applies GeoTensorImage.create on an input tensor or filename.
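To make the chnls_first flag concrete, the sketch below shows the two array layouts it distinguishes. It uses NumPy arrays as a stand-in for tensors, and the helper name `to_channels_first` is hypothetical, not part of this library. How GeoTensorImage.create actually handles the flag is not shown on this page, so treat this as an assumption about what a channels-first flag typically means.

```python
import numpy as np

def to_channels_first(arr: np.ndarray, chnls_first: bool = True) -> np.ndarray:
    """Return `arr` in (channels, height, width) order.

    Hypothetical helper for illustration only; it is not this library's code.
    """
    if arr.ndim != 3:
        raise ValueError(f"expected a 3-D array, got {arr.ndim} dimensions")
    # Already (C, H, W): nothing to do.
    if chnls_first:
        return arr
    # (H, W, C) -> (C, H, W)
    return np.transpose(arr, (2, 0, 1))

# A 13-band, 64x64 image stored channels-last.
hwc = np.zeros((64, 64, 13), dtype=np.float32)
chw = to_channels_first(hwc, chnls_first=False)
print(chw.shape)  # (13, 64, 64)
```

The resulting 13x64x64 layout matches the GeoTensorImage size reported for the EuroSAT sample in the summary output on this page.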

Create a fastai datablock with geotiffs

# get sample geotiff dataset
sat_path = fv.untar_data(EuroSAT100.url)

# create a fastai datablock for geotiff classification
dblock = fv.DataBlock(
    blocks=(GeoImageBlock(), fv.CategoryBlock()),
    get_items=fv.get_image_files,
    splitter=fv.RandomSplitter(valid_pct=0.1, seed=42),
    get_y=fv.parent_label,
    item_tfms=fv.Resize(64),
    batch_tfms=[fv.Normalize.from_stats(EuroSATDataModule.mean, EuroSATDataModule.std)],
)

dblock.summary(sat_path, show_batch=True)

Setting-up type transforms pipelines

Collecting items from /home/butch2/.fastai/data/EuroSAT100

Found 100 items

2 datasets of sizes 90,10

Setting up Pipeline: partial

Setting up Pipeline: parent_label -> Categorize -- {'vocab': None, 'sort': True, 'add_na': False}

Building one sample

Pipeline: partial

starting from

/home/butch2/.fastai/data/EuroSAT100/images/remote_sensing/otherDatasets/sentinel_2/tif/Industrial/Industrial_1906.tif

applying partial gives

GeoTensorImage of size 13x64x64

Pipeline: parent_label -> Categorize -- {'vocab': None, 'sort': True, 'add_na': False}

starting from

/home/butch2/.fastai/data/EuroSAT100/images/remote_sensing/otherDatasets/sentinel_2/tif/Industrial/Industrial_1906.tif

applying parent_label gives

Industrial

applying Categorize -- {'vocab': None, 'sort': True, 'add_na': False} gives

TensorCategory(4)

Final sample: (GeoTensorImage: torch.Size([13, 64, 64]), TensorCategory(4))

Collecting items from /home/butch2/.fastai/data/EuroSAT100

Found 100 items

2 datasets of sizes 90,10

Setting up Pipeline: partial

Setting up Pipeline: parent_label -> Categorize -- {'vocab': None, 'sort': True, 'add_na': False}

Setting up after_item: Pipeline: Resize -- {'size': (64, 64), 'method': 'crop', 'pad_mode': 'reflection', 'resamples': (<Resampling.BILINEAR: 2>, <Resampling.NEAREST: 0>), 'p': 1.0} -> ToTensor

Setting up before_batch: Pipeline:

Setting up after_batch: Pipeline: Normalize -- {'mean': tensor([[[[1354.4055]],

[[1118.2440]],

[[1042.9298]],

[[ 947.6262]],

[[1199.4729]],

[[1999.7909]],

[[2369.2229]],

[[2296.8262]],

[[ 732.0834]],

[[ 12.1133]],

[[1819.0103]],

[[1118.9240]],

[[2594.1409]]]]), 'std': tensor([[[[ 245.7176]],

[[ 333.0078]],

[[ 395.0925]],

[[ 593.7505]],

[[ 566.4170]],

[[ 861.1840]],

[[1086.6313]],

[[1117.9817]],

[[ 404.9198]],

[[ 4.7758]],

[[1002.5877]],

[[ 761.3032]],

[[1231.5858]]]]), 'axes': (0, 2, 3)}

Building one batch

Applying item_tfms to the first sample:

Pipeline: Resize -- {'size': (64, 64), 'method': 'crop', 'pad_mode': 'reflection', 'resamples': (<Resampling.BILINEAR: 2>, <Resampling.NEAREST: 0>), 'p': 1.0} -> ToTensor

starting from

(GeoTensorImage of size 13x64x64, TensorCategory(4))

applying Resize -- {'size': (64, 64), 'method': 'crop', 'pad_mode': 'reflection', 'resamples': (<Resampling.BILINEAR: 2>, <Resampling.NEAREST: 0>), 'p': 1.0} gives

(GeoTensorImage of size 13x64x64, TensorCategory(4))

applying ToTensor gives

(GeoTensorImage of size 13x64x64, TensorCategory(4))

Adding the next 3 samples

No before_batch transform to apply

Collating items in a batch

Applying batch_tfms to the batch built

Pipeline: Normalize -- {'mean': tensor([[[[1354.4055]],

[[1118.2440]],

[[1042.9298]],

[[ 947.6262]],

[[1199.4729]],

[[1999.7909]],

[[2369.2229]],

[[2296.8262]],

[[ 732.0834]],

[[ 12.1133]],

[[1819.0103]],

[[1118.9240]],

[[2594.1409]]]]), 'std': tensor([[[[ 245.7176]],

[[ 333.0078]],

[[ 395.0925]],

[[ 593.7505]],

[[ 566.4170]],

[[ 861.1840]],

[[1086.6313]],

[[1117.9817]],

[[ 404.9198]],

[[ 4.7758]],

[[1002.5877]],

[[ 761.3032]],

[[1231.5858]]]]), 'axes': (0, 2, 3)}

starting from

(GeoTensorImage of size 4x13x64x64, TensorCategory([4, 3, 8, 8]))

applying Normalize -- {'mean': tensor([[[[1354.4055]],

[[1118.2440]],

[[1042.9298]],

[[ 947.6262]],

[[1199.4729]],

[[1999.7909]],

[[2369.2229]],

[[2296.8262]],

[[ 732.0834]],

[[ 12.1133]],

[[1819.0103]],

[[1118.9240]],

[[2594.1409]]]]), 'std': tensor([[[[ 245.7176]],

[[ 333.0078]],

[[ 395.0925]],

[[ 593.7505]],

[[ 566.4170]],

[[ 861.1840]],

[[1086.6313]],

[[1117.9817]],

[[ 404.9198]],

[[ 4.7758]],

[[1002.5877]],

[[ 761.3032]],

[[1231.5858]]]]), 'axes': (0, 2, 3)} gives

(GeoTensorImage of size 4x13x64x64, TensorCategory([4, 3, 8, 8]))

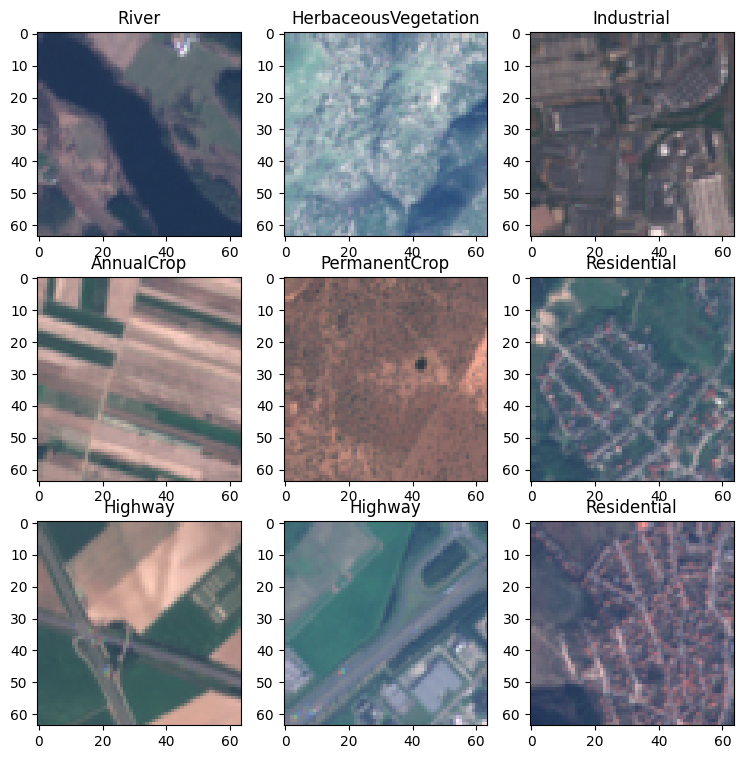

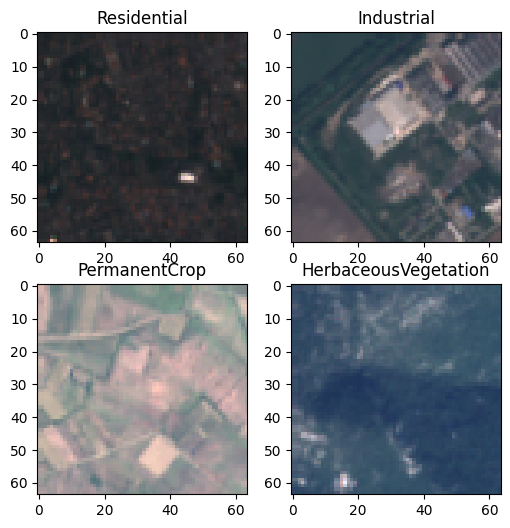
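The Normalize entry in the summary stores mean and std with shape 1x13x1x1 and axes (0, 2, 3): one statistic per band, broadcast over the batch, height, and width axes. The sketch below illustrates that arithmetic under two assumptions made for illustration: NumPy stands in for PyTorch, and random data stands in for real EuroSAT pixels. The datablock above uses precomputed dataset statistics from EuroSATDataModule, whereas this sketch computes them from the fake batch so that it stays self-contained.

```python
import numpy as np

rng = np.random.default_rng(0)
# Fake batch: 4 images, 13 bands, 64x64 pixels (random values, not real data).
batch = rng.normal(loc=1500.0, scale=400.0, size=(4, 13, 64, 64))

# Per-band statistics: reduce over batch, height, and width (axes 0, 2, 3).
axes = (0, 2, 3)
mean = batch.mean(axis=axes, keepdims=True)  # shape (1, 13, 1, 1)
std = batch.std(axis=axes, keepdims=True)    # shape (1, 13, 1, 1)

# Broadcasting applies each band's mean and std to every pixel of that band.
normalized = (batch - mean) / std

print(mean.shape)        # (1, 13, 1, 1)
print(normalized.shape)  # (4, 13, 64, 64)
```

Keeping the statistics at shape 1x13x1x1 means no explicit loop over bands is needed, and the batch shape (4x13x64x64 in the summary) is unchanged by normalization.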

dls = dblock.dataloaders(sat_path, bs=64)

dls.show_batch()
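The RandomSplitter(valid_pct=0.1, seed=42) passed to the datablock is what yields the "2 datasets of sizes 90,10" line in the summary output. As a rough standard-library sketch of a seeded random split (this is not fastai's implementation, and `random_split` is a hypothetical name, so the selected indices will differ from fastai's):

```python
import random

def random_split(n_items, valid_pct=0.1, seed=None):
    """Shuffle indices with a fixed seed and hold out `valid_pct` of them.

    Hypothetical helper for illustration; returns (train_idxs, valid_idxs).
    """
    rng = random.Random(seed)
    idxs = list(range(n_items))
    rng.shuffle(idxs)
    cut = int(valid_pct * n_items)
    return idxs[cut:], idxs[:cut]

train_idxs, valid_idxs = random_split(100, valid_pct=0.1, seed=42)
print(len(train_idxs), len(valid_idxs))  # 90 10
```

Fixing the seed makes the split reproducible, so the same items land in the validation set on every run.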