EuroSAT Land Use/Land Cover (LULC) Classification Tutorial

Fine-tune a torchgeo pretrained ResNet model on the torchgeo EuroSAT dataset for LULC classification

This is a tutorial on fine-tuning a pretrained torchgeo ResNet model on the torchgeo EuroSAT dataset using the fastai framework.

Note: this tutorial assumes some familiarity with the fastai deep learning package and focuses on the torchgeo integration.

Installation

Install the package:

```shell
pip install git+https://github.com/butchland/fastai-torchgeo.git
```

Import the packages and download the EuroSAT dataset

```python
from torchgeo.datasets import EuroSAT
from torchgeo.datamodules import EuroSATDataModule
from torchgeo.models import ResNet50_Weights, resnet50
import fastai.vision.all as fv
from fastai_torchgeo.data import GeoImageBlock
from fastai_torchgeo.resnet import make_resnet_model, resnet_split
```

Create a fastai datablock and fastai dataloaders

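```python
batch_size = 64
num_workers = fv.defaults.cpus
dset_name = 'EuroSAT'
```

```python
dblock = fv.DataBlock(
    blocks=(GeoImageBlock(), fv.CategoryBlock()),  # multi-band GeoTIFF image in, class label out
    get_items=fv.get_image_files,                   # collect every image file under the dataset folder
    splitter=fv.RandomSplitter(valid_pct=0.2, seed=42),  # random 80/20 train/validation split
    get_y=fv.parent_label,                          # the label is the name of the parent folder
    item_tfms=fv.Resize(64),
    # normalize each Sentinel-2 band with the per-band EuroSAT statistics provided by torchgeo
    batch_tfms=[fv.Normalize.from_stats(EuroSATDataModule.mean, EuroSATDataModule.std)],
)
```

```python
cfg = fv.fastai_cfg()
data_dir = cfg.path('data')   # fastai's default data directory (~/.fastai/data)
dset_path = data_dir/dset_name
```

```python
# the torchgeo datamodule is only used here to download EuroSAT into dset_path
datamodule = EuroSATDataModule(root=dset_path, batch_size=batch_size, num_workers=num_workers, download=True)
```

```python
datamodule.prepare_data()
```

CPU times: user 72.8 ms, sys: 8.16 ms, total: 80.9 ms

Wall time: 80.2 ms

```python
dblock.summary(dset_path, show_batch=True)
```

Setting-up type transforms pipelines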

Collecting items from /home/studio-lab-user/.fastai/data/EuroSAT

Found 27000 items

2 datasets of sizes 21600,5400

Setting up Pipeline: partial

Setting up Pipeline: parent_label -> Categorize -- {'vocab': None, 'sort': True, 'add_na': False}

Building one sample

Pipeline: partial

starting from

/home/studio-lab-user/.fastai/data/EuroSAT/ds/images/remote_sensing/otherDatasets/sentinel_2/tif/Highway/Highway_427.tif

applying partial gives

GeoTensorImage of size 13x64x64

Pipeline: parent_label -> Categorize -- {'vocab': None, 'sort': True, 'add_na': False}

starting from

/home/studio-lab-user/.fastai/data/EuroSAT/ds/images/remote_sensing/otherDatasets/sentinel_2/tif/Highway/Highway_427.tif

applying parent_label gives

Highway

applying Categorize -- {'vocab': None, 'sort': True, 'add_na': False} gives

TensorCategory(3)

Final sample: (GeoTensorImage: torch.Size([13, 64, 64]), TensorCategory(3))

Collecting items from /home/studio-lab-user/.fastai/data/EuroSAT

Found 27000 items

2 datasets of sizes 21600,5400

Setting up Pipeline: partial

Setting up Pipeline: parent_label -> Categorize -- {'vocab': None, 'sort': True, 'add_na': False}

Setting up after_item: Pipeline: Resize -- {'size': (64, 64), 'method': 'crop', 'pad_mode': 'reflection', 'resamples': (<Resampling.BILINEAR: 2>, <Resampling.NEAREST: 0>), 'p': 1.0} -> ToTensor

Setting up before_batch: Pipeline:

Setting up after_batch: Pipeline: Normalize -- {'mean': tensor([[[[1354.4055]],

[[1118.2440]],

[[1042.9298]],

[[ 947.6262]],

[[1199.4729]],

[[1999.7909]],

[[2369.2229]],

[[2296.8262]],

[[ 732.0834]],

[[ 12.1133]],

[[1819.0103]],

[[1118.9240]],

[[2594.1409]]]], device='cuda:0'), 'std': tensor([[[[ 245.7176]],

[[ 333.0078]],

[[ 395.0925]],

[[ 593.7505]],

[[ 566.4170]],

[[ 861.1840]],

[[1086.6313]],

[[1117.9817]],

[[ 404.9198]],

[[ 4.7758]],

[[1002.5877]],

[[ 761.3032]],

[[1231.5858]]]], device='cuda:0'), 'axes': (0, 2, 3)}

Building one batch

Applying item_tfms to the first sample:

Pipeline: Resize -- {'size': (64, 64), 'method': 'crop', 'pad_mode': 'reflection', 'resamples': (<Resampling.BILINEAR: 2>, <Resampling.NEAREST: 0>), 'p': 1.0} -> ToTensor

starting from

(GeoTensorImage of size 13x64x64, TensorCategory(3))

applying Resize -- {'size': (64, 64), 'method': 'crop', 'pad_mode': 'reflection', 'resamples': (<Resampling.BILINEAR: 2>, <Resampling.NEAREST: 0>), 'p': 1.0} gives

(GeoTensorImage of size 13x64x64, TensorCategory(3))

applying ToTensor gives

(GeoTensorImage of size 13x64x64, TensorCategory(3))

Adding the next 3 samples

No before_batch transform to apply

Collating items in a batch

Applying batch_tfms to the batch built

Pipeline: Normalize -- {'mean': tensor([[[[1354.4055]],

[[1118.2440]],

[[1042.9298]],

[[ 947.6262]],

[[1199.4729]],

[[1999.7909]],

[[2369.2229]],

[[2296.8262]],

[[ 732.0834]],

[[ 12.1133]],

[[1819.0103]],

[[1118.9240]],

[[2594.1409]]]], device='cuda:0'), 'std': tensor([[[[ 245.7176]],

[[ 333.0078]],

[[ 395.0925]],

[[ 593.7505]],

[[ 566.4170]],

[[ 861.1840]],

[[1086.6313]],

[[1117.9817]],

[[ 404.9198]],

[[ 4.7758]],

[[1002.5877]],

[[ 761.3032]],

[[1231.5858]]]], device='cuda:0'), 'axes': (0, 2, 3)}

starting from

(GeoTensorImage of size 4x13x64x64, TensorCategory([3, 0, 7, 1], device='cuda:0'))

applying Normalize -- {'mean': tensor([[[[1354.4055]],

[[1118.2440]],

[[1042.9298]],

[[ 947.6262]],

[[1199.4729]],

[[1999.7909]],

[[2369.2229]],

[[2296.8262]],

[[ 732.0834]],

[[ 12.1133]],

[[1819.0103]],

[[1118.9240]],

[[2594.1409]]]], device='cuda:0'), 'std': tensor([[[[ 245.7176]],

[[ 333.0078]],

[[ 395.0925]],

[[ 593.7505]],

[[ 566.4170]],

[[ 861.1840]],

[[1086.6313]],

[[1117.9817]],

[[ 404.9198]],

[[ 4.7758]],

[[1002.5877]],

[[ 761.3032]],

[[1231.5858]]]], device='cuda:0'), 'axes': (0, 2, 3)} gives

(GeoTensorImage of size 4x13x64x64, TensorCategory([3, 0, 7, 1], device='cuda:0'))
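The Normalize step in the log above uses axes (0, 2, 3), so each of the 13 bands is standardized independently: a value x in band c becomes (x - mean[c]) / std[c]. A minimal pure-Python sketch of that arithmetic for a single pixel, with the per-band statistics copied (rounded) from the log; it illustrates the formula only and is not fastai's implementation, which works on whole batches on the GPU.

```python
# Per-band EuroSAT statistics, rounded from the Normalize log above (13 Sentinel-2 bands)
MEAN = [1354.4055, 1118.2440, 1042.9298, 947.6262, 1199.4729, 1999.7909, 2369.2229,
        2296.8262, 732.0834, 12.1133, 1819.0103, 1118.9240, 2594.1409]
STD = [245.7176, 333.0078, 395.0925, 593.7505, 566.4170, 861.1840, 1086.6313,
       1117.9817, 404.9198, 4.7758, 1002.5877, 761.3032, 1231.5858]


def normalize_pixel(bands):
    """Standardize one 13-band pixel: (x - mean[c]) / std[c] for every band c."""
    if len(bands) != len(MEAN):
        raise ValueError(f"expected {len(MEAN)} bands, got {len(bands)}")
    return [(x - m) / s for x, m, s in zip(bands, MEAN, STD)]


def denormalize_pixel(values):
    """Invert normalize_pixel, e.g. to map model inputs back to raw band values."""
    return [v * s + m for v, m, s in zip(values, MEAN, STD)]


# A pixel at the dataset mean maps to all zeros; one standard deviation above
# the mean maps to (approximately, up to float rounding) all ones.
print(normalize_pixel(MEAN))
print(normalize_pixel([m + s for m, s in zip(MEAN, STD)]))
```

Because every band is scaled by its own statistics, bands with very different ranges (for example the fourth-from-last band, with a mean near 12) end up on a comparable scale before reaching the network.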

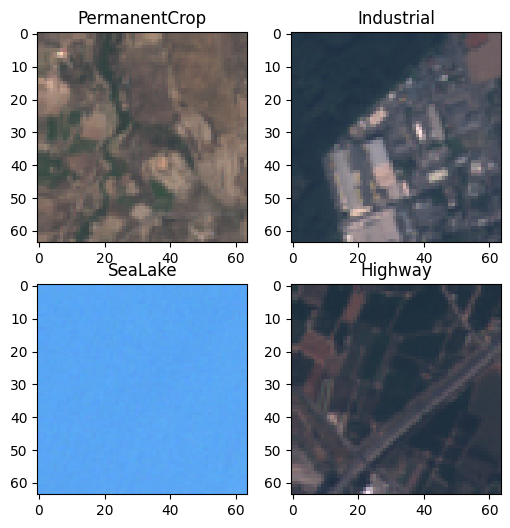

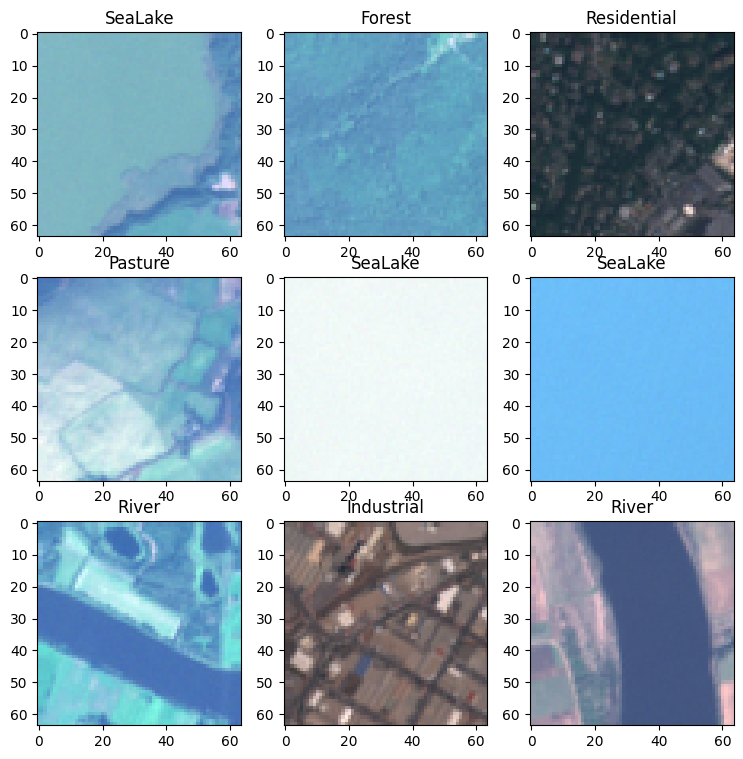

```python
dls = dblock.dataloaders(dset_path, bs=batch_size)
dls.show_batch()
```
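The RandomSplitter(valid_pct=0.2, seed=42) in the DataBlock shuffles the 27,000 file paths once and holds out 20% of them, which is where the "2 datasets of sizes 21600,5400" line in the summary comes from. A minimal stdlib sketch of the same idea; it uses Python's random module rather than the torch permutation fastai uses, so the actual indices differ from fastai's even with the same seed.

```python
import random


def random_split(n_items, valid_pct=0.2, seed=42):
    """Return (train_idxs, valid_idxs): shuffle all indices, hold out the first valid_pct."""
    rng = random.Random(seed)          # local RNG so the split is reproducible
    idxs = list(range(n_items))
    rng.shuffle(idxs)
    cut = int(valid_pct * n_items)     # number of validation items
    return idxs[cut:], idxs[:cut]


train_idxs, valid_idxs = random_split(27000)
print(len(train_idxs), len(valid_idxs))  # 21600 5400, matching the summary log
```

Fixing the seed matters here: rebuilding the dataloaders in a later session gives the same validation set, so accuracies from different runs remain comparable.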

Download the torchgeo pretrained ResNet model and prepare a fastai-compatible model

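```python
# load ResNet-50 weights pretrained with MoCo on all 13 Sentinel-2 bands
pretrained = resnet50(ResNet50_Weights.SENTINEL2_ALL_MOCO, num_classes=10)
# wrap the pretrained backbone with a new classification head for the 10 EuroSAT classes
model = make_resnet_model(pretrained, n_out=10)
```

Create a fastai learner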

```python
learn = fv.Learner(
    dls,
    model,
    loss_func=fv.CrossEntropyLossFlat(),
    metrics=[fv.accuracy],
    splitter=resnet_split,  # splits the model into parameter groups for freezing and discriminative LRs
)

# freeze() uses the parameter groups created by `resnet_split`
# to lock the parameters of the pretrained model, except for the model head
learn.freeze()
# note: only the head parameter group is trainable; BatchNorm layers also stay trainable
# because fastai's Learner defaults to train_bn=True
```
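With the body frozen, only the BatchNorm layers and the new head are trained. The parameter totals that learn.summary() reports can be reproduced by hand from the standard ResNet-50 layout (bottleneck blocks 3-4-6-3 with widths 64/128/256/512 and 4x channel expansion). This sketch assumes, as the printed per-layer counts suggest, that convolutions have no bias, that the 7x7 stem takes 13 input bands, and that the head concatenates average and max pooling (2 x 2048 = 4096 features) followed by BatchNorm1d(4096), a bias-free Linear(4096, 512), BatchNorm1d(512) and a bias-free Linear(512, 10).

```python
IN_BANDS = 13
N_CLASSES = 10
STAGES = [(3, 64), (4, 128), (6, 256), (3, 512)]  # (blocks, bottleneck width) per ResNet-50 stage
EXPANSION = 4

conv = IN_BANDS * 64 * 7 * 7   # 7x7 stem convolution, no bias
bn = 2 * 64                    # stem BatchNorm2d: weight + bias per channel
in_ch = 64
for n_blocks, width in STAGES:
    out_ch = width * EXPANSION
    for b in range(n_blocks):
        conv += in_ch * width          # 1x1 reduce
        conv += width * width * 9      # 3x3
        conv += width * out_ch         # 1x1 expand
        bn += 2 * (width + width + out_ch)
        if b == 0:                     # first block of each stage has a 1x1 downsample shortcut
            conv += in_ch * out_ch
            bn += 2 * out_ch
        in_ch = out_ch

pooled = 2 * in_ch  # AdaptiveAvgPool2d and AdaptiveMaxPool2d outputs are concatenated
head = 2 * pooled + pooled * 512 + 2 * 512 + 512 * N_CLASSES

trainable = bn + head   # BatchNorm layers stay trainable when frozen (train_bn=True)
frozen = conv           # all convolution weights of the pretrained body
print(f"trainable: {trainable:,}  frozen: {frozen:,}  total: {trainable + frozen:,}")
```

The counts come out to 2,164,608 trainable and 23,486,272 frozen parameters, the same totals as the summary. The only difference from an ImageNet ResNet-50 body is the stem: 10 extra input bands add 10 x 64 x 7 x 7 = 31,360 weights.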

```python
learn.summary()
```

Sequential (Input shape: 64 x 13 x 64 x 64)

============================================================================

Layer (type) Output Shape Param # Trainable

============================================================================

64 x 64 x 32 x 32

Conv2d 40768 False

BatchNorm2d 128 True

ReLU

____________________________________________________________________________

64 x 64 x 16 x 16

MaxPool2d

Conv2d 4096 False

BatchNorm2d 128 True

ReLU

Conv2d 36864 False

BatchNorm2d 128 True

Identity

ReLU

Identity

____________________________________________________________________________

64 x 256 x 16 x 16

Conv2d 16384 False

BatchNorm2d 512 True

ReLU

Conv2d 16384 False

BatchNorm2d 512 True

____________________________________________________________________________

64 x 64 x 16 x 16

Conv2d 16384 False

BatchNorm2d 128 True

ReLU

Conv2d 36864 False

BatchNorm2d 128 True

Identity

ReLU

Identity

____________________________________________________________________________

64 x 256 x 16 x 16

Conv2d 16384 False

BatchNorm2d 512 True

ReLU

____________________________________________________________________________

64 x 64 x 16 x 16

Conv2d 16384 False

BatchNorm2d 128 True

ReLU

Conv2d 36864 False

BatchNorm2d 128 True

Identity

ReLU

Identity

____________________________________________________________________________

64 x 256 x 16 x 16

Conv2d 16384 False

BatchNorm2d 512 True

ReLU

____________________________________________________________________________

64 x 128 x 16 x 16

Conv2d 32768 False

BatchNorm2d 256 True

ReLU

____________________________________________________________________________

64 x 128 x 8 x 8

Conv2d 147456 False

BatchNorm2d 256 True

Identity

ReLU

Identity

____________________________________________________________________________

64 x 512 x 8 x 8

Conv2d 65536 False

BatchNorm2d 1024 True

ReLU

Conv2d 131072 False

BatchNorm2d 1024 True

____________________________________________________________________________

64 x 128 x 8 x 8

Conv2d 65536 False

BatchNorm2d 256 True

ReLU

Conv2d 147456 False

BatchNorm2d 256 True

Identity

ReLU

Identity

____________________________________________________________________________

64 x 512 x 8 x 8

Conv2d 65536 False

BatchNorm2d 1024 True

ReLU

____________________________________________________________________________

64 x 128 x 8 x 8

Conv2d 65536 False

BatchNorm2d 256 True

ReLU

Conv2d 147456 False

BatchNorm2d 256 True

Identity

ReLU

Identity

____________________________________________________________________________

64 x 512 x 8 x 8

Conv2d 65536 False

BatchNorm2d 1024 True

ReLU

____________________________________________________________________________

64 x 128 x 8 x 8

Conv2d 65536 False

BatchNorm2d 256 True

ReLU

Conv2d 147456 False

BatchNorm2d 256 True

Identity

ReLU

Identity

____________________________________________________________________________

64 x 512 x 8 x 8

Conv2d 65536 False

BatchNorm2d 1024 True

ReLU

____________________________________________________________________________

64 x 256 x 8 x 8

Conv2d 131072 False

BatchNorm2d 512 True

ReLU

____________________________________________________________________________

64 x 256 x 4 x 4

Conv2d 589824 False

BatchNorm2d 512 True

Identity

ReLU

Identity

____________________________________________________________________________

64 x 1024 x 4 x 4

Conv2d 262144 False

BatchNorm2d 2048 True

ReLU

Conv2d 524288 False

BatchNorm2d 2048 True

____________________________________________________________________________

64 x 256 x 4 x 4

Conv2d 262144 False

BatchNorm2d 512 True

ReLU

Conv2d 589824 False

BatchNorm2d 512 True

Identity

ReLU

Identity

____________________________________________________________________________

64 x 1024 x 4 x 4

Conv2d 262144 False

BatchNorm2d 2048 True

ReLU

____________________________________________________________________________

64 x 256 x 4 x 4

Conv2d 262144 False

BatchNorm2d 512 True

ReLU

Conv2d 589824 False

BatchNorm2d 512 True

Identity

ReLU

Identity

____________________________________________________________________________

64 x 1024 x 4 x 4

Conv2d 262144 False

BatchNorm2d 2048 True

ReLU

____________________________________________________________________________

64 x 256 x 4 x 4

Conv2d 262144 False

BatchNorm2d 512 True

ReLU

Conv2d 589824 False

BatchNorm2d 512 True

Identity

ReLU

Identity

____________________________________________________________________________

64 x 1024 x 4 x 4

Conv2d 262144 False

BatchNorm2d 2048 True

ReLU

____________________________________________________________________________

64 x 256 x 4 x 4

Conv2d 262144 False

BatchNorm2d 512 True

ReLU

Conv2d 589824 False

BatchNorm2d 512 True

Identity

ReLU

Identity

____________________________________________________________________________

64 x 1024 x 4 x 4

Conv2d 262144 False

BatchNorm2d 2048 True

ReLU

____________________________________________________________________________

64 x 256 x 4 x 4

Conv2d 262144 False

BatchNorm2d 512 True

ReLU

Conv2d 589824 False

BatchNorm2d 512 True

Identity

ReLU

Identity

____________________________________________________________________________

64 x 1024 x 4 x 4

Conv2d 262144 False

BatchNorm2d 2048 True

ReLU

____________________________________________________________________________

64 x 512 x 4 x 4

Conv2d 524288 False

BatchNorm2d 1024 True

ReLU

____________________________________________________________________________

64 x 512 x 2 x 2

Conv2d 2359296 False

BatchNorm2d 1024 True

Identity

ReLU

Identity

____________________________________________________________________________

64 x 2048 x 2 x 2

Conv2d 1048576 False

BatchNorm2d 4096 True

ReLU

Conv2d 2097152 False

BatchNorm2d 4096 True

____________________________________________________________________________

64 x 512 x 2 x 2

Conv2d 1048576 False

BatchNorm2d 1024 True

ReLU

Conv2d 2359296 False

BatchNorm2d 1024 True

Identity

ReLU

Identity

____________________________________________________________________________

64 x 2048 x 2 x 2

Conv2d 1048576 False

BatchNorm2d 4096 True

ReLU

____________________________________________________________________________

64 x 512 x 2 x 2

Conv2d 1048576 False

BatchNorm2d 1024 True

ReLU

Conv2d 2359296 False

BatchNorm2d 1024 True

Identity

ReLU

Identity

____________________________________________________________________________

64 x 2048 x 2 x 2

Conv2d 1048576 False

BatchNorm2d 4096 True

ReLU

____________________________________________________________________________

64 x 2048 x 1 x 1

AdaptiveAvgPool2d

AdaptiveMaxPool2d

____________________________________________________________________________

64 x 4096

Flatten

BatchNorm1d 8192 True

Dropout

____________________________________________________________________________

64 x 512

Linear 2097152 True

ReLU

BatchNorm1d 1024 True

Dropout

____________________________________________________________________________

64 x 10

Linear 5120 True

____________________________________________________________________________

Total params: 25,650,880

Total trainable params: 2,164,608

Total non-trainable params: 23,486,272

Optimizer used: <function Adam>

Loss function: FlattenedLoss of CrossEntropyLoss()

Model frozen up to parameter group #2

Callbacks:

- TrainEvalCallback

- CastToTensor

- Recorder

- ProgressCallback

Train the model

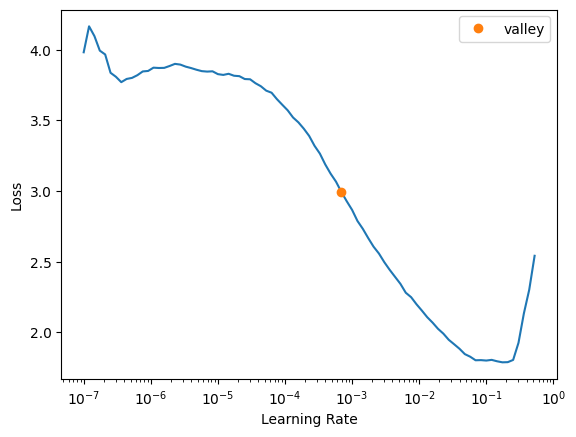

learn.lr_find()SuggestedLRs(valley=0.0006918309954926372)
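lr_find() runs a short learning-rate range test: it trains on successive mini-batches while raising the learning rate exponentially from start_lr towards end_lr, records the loss at each step, and the valley value is a suggestion read off the resulting loss curve. A minimal sketch of just the exponential sweep, assuming fastai's defaults start_lr=1e-7, end_lr=10 and num_it=100; it does not reproduce the suggestion heuristic or the early stop when the loss diverges.

```python
def lr_sweep(start_lr=1e-7, end_lr=10.0, num_it=100):
    """Learning rate used at each of the num_it steps of an exponential range test."""
    return [start_lr * (end_lr / start_lr) ** (i / num_it) for i in range(num_it)]


lrs = lr_sweep()
# a few points of the sweep; halfway through it reaches 1e-3
for i in (0, 25, 50, 75, 99):
    print(i, f"{lrs[i]:.2e}")
```

Because the rate grows by a constant factor per step, the sweep spends as many steps between 1e-6 and 1e-5 as between 1e-2 and 1e-1, which is why the lr_find plot uses a logarithmic x-axis.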

```python
from fastai.callback.tracker import SaveModelCallback
```

```python
# fine_tune trains for 3 epochs with the body frozen, then unfreezes and trains
# the whole model for 10 epochs; base_lr is close to the lr_find valley (about 6.9e-4).
# SaveModelCallback saves the weights each time validation accuracy improves.
learn.fine_tune(10, freeze_epochs=3, base_lr=7e-4,
                cbs=[SaveModelCallback(monitor='accuracy', fname='euronet-resnet50-stage1')])
```

| epoch | train_loss | valid_loss | accuracy | time |
|---|---|---|---|---|
| 0 | 0.494235 | 0.293842 | 0.907778 | 00:41 |
| 1 | 0.333531 | 0.231448 | 0.921481 | 00:41 |
| 2 | 0.239049 | 0.184895 | 0.939444 | 00:41 |

Better model found at epoch 0 with accuracy value: 0.9077777862548828.

Better model found at epoch 1 with accuracy value: 0.9214814901351929.

Better model found at epoch 2 with accuracy value: 0.9394444227218628.

| epoch | train_loss | valid_loss | accuracy | time |
|---|---|---|---|---|
| 0 | 0.162829 | 0.124671 | 0.959074 | 00:43 |
| 1 | 0.088713 | 0.112167 | 0.963148 | 00:43 |
| 2 | 0.063464 | 0.126567 | 0.962037 | 00:43 |
| 3 | 0.055648 | 0.104110 | 0.968889 | 00:43 |
| 4 | 0.032861 | 0.103171 | 0.970741 | 00:45 |
| 5 | 0.021249 | 0.102579 | 0.972222 | 00:44 |
| 6 | 0.010938 | 0.102371 | 0.973333 | 00:43 |
| 7 | 0.005382 | 0.097377 | 0.974074 | 00:43 |
| 8 | 0.004003 | 0.101503 | 0.973889 | 00:44 |
| 9 | 0.002489 | 0.098339 | 0.976296 | 00:44 |

Better model found at epoch 0 with accuracy value: 0.959074079990387.

Better model found at epoch 1 with accuracy value: 0.9631481766700745.

Better model found at epoch 3 with accuracy value: 0.9688888788223267.

Better model found at epoch 4 with accuracy value: 0.9707407355308533.

Better model found at epoch 5 with accuracy value: 0.9722222089767456.

Better model found at epoch 6 with accuracy value: 0.9733333587646484.

Better model found at epoch 7 with accuracy value: 0.9740740656852722.

Better model found at epoch 9 with accuracy value: 0.9762963056564331.
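SaveModelCallback(monitor='accuracy') keeps the best value seen so far and saves the weights whenever an epoch beats it, which produces the "Better model found at epoch ..." lines; at the end of each fit it reloads the best checkpoint. Because fine_tune runs two separate fits (frozen, then unfrozen) and the tracker resets its best value at the start of every fit, both the epoch numbers and the best value restart for the unfrozen phase. A simplified pure-Python sketch of that bookkeeping (no saving), assuming a strict "greater than best" comparison for a higher-is-better metric such as accuracy:

```python
def better_epochs(metric_per_epoch, min_delta=0.0):
    """Epochs at which a higher-is-better metric improves on the best value so far."""
    best = float("-inf")  # reset at the start of every fit
    improved = []
    for epoch, value in enumerate(metric_per_epoch):
        if value - min_delta > best:
            best = value
            improved.append(epoch)  # SaveModelCallback would save the weights here
    return improved


# Validation accuracy of the unfrozen phase, copied from the table above
unfrozen_acc = [0.959074, 0.963148, 0.962037, 0.968889, 0.970741,
                0.972222, 0.973333, 0.974074, 0.973889, 0.976296]
print(better_epochs(unfrozen_acc))  # [0, 1, 3, 4, 5, 6, 7, 9]
```

Epochs 2 and 8 are skipped because their accuracy dips below the running best, exactly as in the training log.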

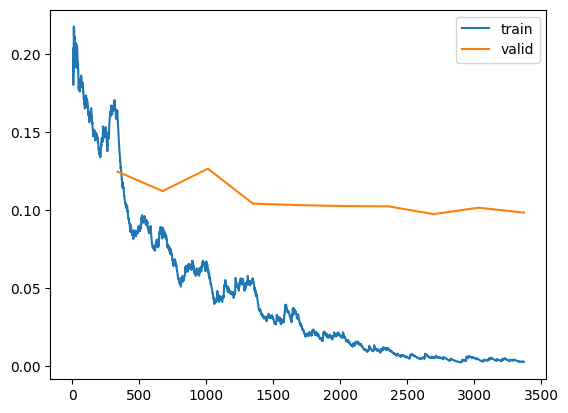

learn.save('euronet-resnet50-stage1-final')Path('models/euronet-resnet50-stage1-final.pth')learn.recorder.plot_loss()
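Both training stages rely on fastai turning a learning rate into one value per parameter group. Based on my reading of the fastai 2.x source (check your installed version): slice(stop) gives every group stop/10 except the last (the head), which gets stop; slice(start, stop) spaces the values geometrically from start for the first group to stop for the last; and fine_tune(epochs, base_lr, freeze_epochs) trains the frozen model with slice(base_lr), then halves base_lr and trains the unfrozen model with slice(base_lr/100, base_lr). The values below are the peak rates each one-cycle schedule ramps up to. The sketch assumes three parameter groups, inferred from the "Model frozen up to parameter group #2" line in the first summary.

```python
def lr_range(lr, n_groups=3):
    """One learning rate per parameter group, following fastai's slice conventions."""
    if not isinstance(lr, slice):
        return [lr] * n_groups                  # a plain float is used for every group
    if lr.start:                                # slice(start, stop): geometric spacing
        step = (lr.stop / lr.start) ** (1 / (n_groups - 1))
        return [lr.start * step ** i for i in range(n_groups)]
    # slice(stop): every group except the last gets stop/10, the last (head) gets stop
    return [lr.stop / 10] * (n_groups - 1) + [lr.stop]


base_lr = 7e-4
frozen_phase = lr_range(slice(base_lr))                   # fine_tune: 3 frozen epochs
half_lr = base_lr / 2                                     # fine_tune halves base_lr before unfreezing
unfrozen_phase = lr_range(slice(half_lr / 100, half_lr))  # fine_tune: 10 unfrozen epochs (lr_mult=100)
stage2 = lr_range(slice(3e-3, 6e-6))                      # fit_one_cycle(20, lr_max=slice(3e-3, 6e-6))

for name, lrs in [("frozen", frozen_phase), ("unfrozen", unfrozen_phase), ("stage 2", stage2)]:
    print(f"{name:>8}:", ", ".join(f"{x:.2e}" for x in lrs))
```

With these conventions, fine_tune gives the head the largest rate and the earliest layers the smallest (3.5e-6, 3.5e-5, 3.5e-4 when unfrozen), while slice(3e-3, 6e-6) in the second stage does the opposite: about 3e-3 for the first group, 1.3e-4 for the middle group and 6e-6 for the head.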

After fine_tune finishes, the model is unfrozen, so every layer is trainable:

```python
learn.summary()
```

Sequential (Input shape: 64 x 13 x 64 x 64)

============================================================================

Layer (type) Output Shape Param # Trainable

============================================================================

64 x 64 x 32 x 32

Conv2d 40768 True

BatchNorm2d 128 True

ReLU

____________________________________________________________________________

64 x 64 x 16 x 16

MaxPool2d

Conv2d 4096 True

BatchNorm2d 128 True

ReLU

Conv2d 36864 True

BatchNorm2d 128 True

Identity

ReLU

Identity

____________________________________________________________________________

64 x 256 x 16 x 16

Conv2d 16384 True

BatchNorm2d 512 True

ReLU

Conv2d 16384 True

BatchNorm2d 512 True

____________________________________________________________________________

64 x 64 x 16 x 16

Conv2d 16384 True

BatchNorm2d 128 True

ReLU

Conv2d 36864 True

BatchNorm2d 128 True

Identity

ReLU

Identity

____________________________________________________________________________

64 x 256 x 16 x 16

Conv2d 16384 True

BatchNorm2d 512 True

ReLU

____________________________________________________________________________

64 x 64 x 16 x 16

Conv2d 16384 True

BatchNorm2d 128 True

ReLU

Conv2d 36864 True

BatchNorm2d 128 True

Identity

ReLU

Identity

____________________________________________________________________________

64 x 256 x 16 x 16

Conv2d 16384 True

BatchNorm2d 512 True

ReLU

____________________________________________________________________________

64 x 128 x 16 x 16

Conv2d 32768 True

BatchNorm2d 256 True

ReLU

____________________________________________________________________________

64 x 128 x 8 x 8

Conv2d 147456 True

BatchNorm2d 256 True

Identity

ReLU

Identity

____________________________________________________________________________

64 x 512 x 8 x 8

Conv2d 65536 True

BatchNorm2d 1024 True

ReLU

Conv2d 131072 True

BatchNorm2d 1024 True

____________________________________________________________________________

64 x 128 x 8 x 8

Conv2d 65536 True

BatchNorm2d 256 True

ReLU

Conv2d 147456 True

BatchNorm2d 256 True

Identity

ReLU

Identity

____________________________________________________________________________

64 x 512 x 8 x 8

Conv2d 65536 True

BatchNorm2d 1024 True

ReLU

____________________________________________________________________________

64 x 128 x 8 x 8

Conv2d 65536 True

BatchNorm2d 256 True

ReLU

Conv2d 147456 True

BatchNorm2d 256 True

Identity

ReLU

Identity

____________________________________________________________________________

64 x 512 x 8 x 8

Conv2d 65536 True

BatchNorm2d 1024 True

ReLU

____________________________________________________________________________

64 x 128 x 8 x 8

Conv2d 65536 True

BatchNorm2d 256 True

ReLU

Conv2d 147456 True

BatchNorm2d 256 True

Identity

ReLU

Identity

____________________________________________________________________________

64 x 512 x 8 x 8

Conv2d 65536 True

BatchNorm2d 1024 True

ReLU

____________________________________________________________________________

64 x 256 x 8 x 8

Conv2d 131072 True

BatchNorm2d 512 True

ReLU

____________________________________________________________________________

64 x 256 x 4 x 4

Conv2d 589824 True

BatchNorm2d 512 True

Identity

ReLU

Identity

____________________________________________________________________________

64 x 1024 x 4 x 4

Conv2d 262144 True

BatchNorm2d 2048 True

ReLU

Conv2d 524288 True

BatchNorm2d 2048 True

____________________________________________________________________________

64 x 256 x 4 x 4

Conv2d 262144 True

BatchNorm2d 512 True

ReLU

Conv2d 589824 True

BatchNorm2d 512 True

Identity

ReLU

Identity

____________________________________________________________________________

64 x 1024 x 4 x 4

Conv2d 262144 True

BatchNorm2d 2048 True

ReLU

____________________________________________________________________________

64 x 256 x 4 x 4

Conv2d 262144 True

BatchNorm2d 512 True

ReLU

Conv2d 589824 True

BatchNorm2d 512 True

Identity

ReLU

Identity

____________________________________________________________________________

64 x 1024 x 4 x 4

Conv2d 262144 True

BatchNorm2d 2048 True

ReLU

____________________________________________________________________________

64 x 256 x 4 x 4

Conv2d 262144 True

BatchNorm2d 512 True

ReLU

Conv2d 589824 True

BatchNorm2d 512 True

Identity

ReLU

Identity

____________________________________________________________________________

64 x 1024 x 4 x 4

Conv2d 262144 True

BatchNorm2d 2048 True

ReLU

____________________________________________________________________________

64 x 256 x 4 x 4

Conv2d 262144 True

BatchNorm2d 512 True

ReLU

Conv2d 589824 True

BatchNorm2d 512 True

Identity

ReLU

Identity

____________________________________________________________________________

64 x 1024 x 4 x 4

Conv2d 262144 True

BatchNorm2d 2048 True

ReLU

____________________________________________________________________________

64 x 256 x 4 x 4

Conv2d 262144 True

BatchNorm2d 512 True

ReLU

Conv2d 589824 True

BatchNorm2d 512 True

Identity

ReLU

Identity

____________________________________________________________________________

64 x 1024 x 4 x 4

Conv2d 262144 True

BatchNorm2d 2048 True

ReLU

____________________________________________________________________________

64 x 512 x 4 x 4

Conv2d 524288 True

BatchNorm2d 1024 True

ReLU

____________________________________________________________________________

64 x 512 x 2 x 2

Conv2d 2359296 True

BatchNorm2d 1024 True

Identity

ReLU

Identity

____________________________________________________________________________

64 x 2048 x 2 x 2

Conv2d 1048576 True

BatchNorm2d 4096 True

ReLU

Conv2d 2097152 True

BatchNorm2d 4096 True

____________________________________________________________________________

64 x 512 x 2 x 2

Conv2d 1048576 True

BatchNorm2d 1024 True

ReLU

Conv2d 2359296 True

BatchNorm2d 1024 True

Identity

ReLU

Identity

____________________________________________________________________________

64 x 2048 x 2 x 2

Conv2d 1048576 True

BatchNorm2d 4096 True

ReLU

____________________________________________________________________________

64 x 512 x 2 x 2

Conv2d 1048576 True

BatchNorm2d 1024 True

ReLU

Conv2d 2359296 True

BatchNorm2d 1024 True

Identity

ReLU

Identity

____________________________________________________________________________

64 x 2048 x 2 x 2

Conv2d 1048576 True

BatchNorm2d 4096 True

ReLU

____________________________________________________________________________

64 x 2048 x 1 x 1

AdaptiveAvgPool2d

AdaptiveMaxPool2d

____________________________________________________________________________

64 x 4096

Flatten

BatchNorm1d 8192 True

Dropout

____________________________________________________________________________

64 x 512

Linear 2097152 True

ReLU

BatchNorm1d 1024 True

Dropout

____________________________________________________________________________

64 x 10

Linear 5120 True

____________________________________________________________________________

Total params: 25,650,880

Total trainable params: 25,650,880

Total non-trainable params: 0

Optimizer used: <function Adam>

Loss function: FlattenedLoss of CrossEntropyLoss()

Model unfrozen

Callbacks:

- TrainEvalCallback

- CastToTensor

- Recorder

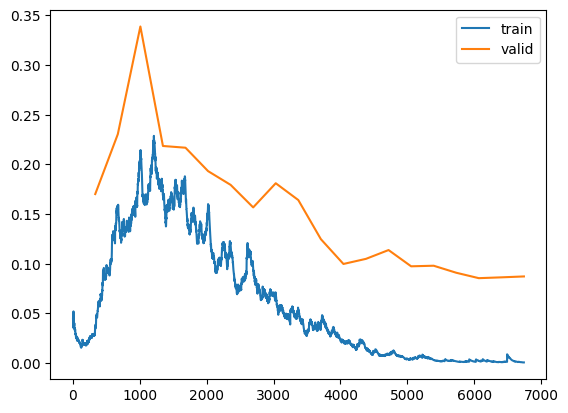

- ProgressCallbacklearn.fit_one_cycle(20, lr_max=slice(3e-3, 6e-6), cbs=[SaveModelCallback(monitor='accuracy',fname='euronet-resnet50-stage2')])| epoch | train_loss | valid_loss | accuracy | time |

|---|---|---|---|---|

| 0 | 0.035239 | 0.170115 | 0.959259 | 00:44 |

| 1 | 0.154670 | 0.230367 | 0.944815 | 00:44 |

| 2 | 0.207444 | 0.338804 | 0.908889 | 00:44 |

| 3 | 0.170779 | 0.218512 | 0.938704 | 00:44 |

| 4 | 0.176847 | 0.216765 | 0.937593 | 00:44 |

| 5 | 0.159534 | 0.193209 | 0.940926 | 00:44 |

| 6 | 0.120023 | 0.179367 | 0.944444 | 00:44 |

| 7 | 0.095654 | 0.156686 | 0.953148 | 00:44 |

| 8 | 0.069015 | 0.181001 | 0.948519 | 00:44 |

| 9 | 0.053350 | 0.164088 | 0.952222 | 00:44 |

| 10 | 0.036882 | 0.124723 | 0.964630 | 00:43 |

| 11 | 0.021793 | 0.099748 | 0.972963 | 00:43 |

| 12 | 0.014808 | 0.104900 | 0.972593 | 00:44 |

| 13 | 0.009095 | 0.113750 | 0.973148 | 00:44 |

| 14 | 0.004226 | 0.097392 | 0.976111 | 00:44 |

| 15 | 0.003125 | 0.097975 | 0.976852 | 00:44 |

| 16 | 0.001740 | 0.090906 | 0.977593 | 00:44 |

| 17 | 0.002263 | 0.085452 | 0.979444 | 00:44 |

| 18 | 0.000893 | 0.086279 | 0.980185 | 00:44 |

| 19 | 0.000644 | 0.087188 | 0.979444 | 00:44 |

Better model found at epoch 0 with accuracy value: 0.9592592716217041.

Better model found at epoch 10 with accuracy value: 0.9646296501159668.

Better model found at epoch 11 with accuracy value: 0.9729629755020142.

Better model found at epoch 13 with accuracy value: 0.9731481671333313.

Better model found at epoch 14 with accuracy value: 0.976111114025116.

Better model found at epoch 15 with accuracy value: 0.9768518805503845.

Better model found at epoch 16 with accuracy value: 0.9775925874710083.

Better model found at epoch 17 with accuracy value: 0.9794444441795349.

Better model found at epoch 18 with accuracy value: 0.9801852107048035.Post training (error analysis and setting up for inference)

learn.recorder.plot_loss()

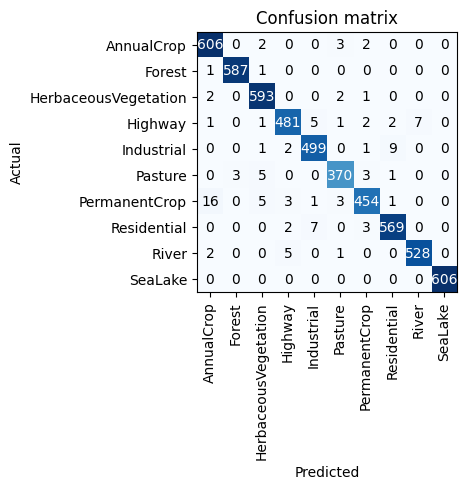

learn.save('euronet-resnet50-stage2-final')Path('models/euronet-resnet50-stage2-final.pth')learn.validate()(#2) [0.08627868443727493,0.9801852107048035]interp = fv.ClassificationInterpretation.from_learner(learn)interp.plot_confusion_matrix()

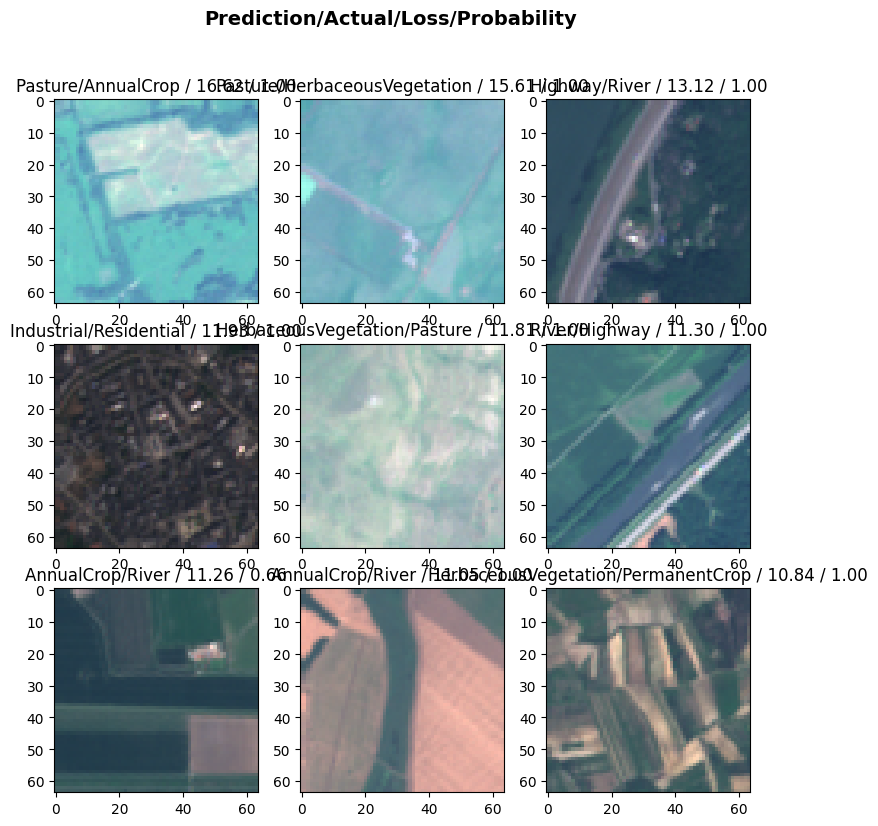

```python
# show the 9 validation images with the highest loss
interp.plot_top_losses(9)
```
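ClassificationInterpretation compares the predicted class (the argmax of the model outputs) with the target for every validation item. The confusion matrix counts each (actual, predicted) pair, with rows and columns ordered by dls.vocab, which Categorize sorts alphabetically (index 3 is Highway, as in the summary log); fastai's interp.most_confused() lists the largest off-diagonal cells. A minimal pure-Python sketch of that counting; the class list assumes the ten EuroSAT folder names, and the targets and predictions are made-up toy values, not results from this model.

```python
from collections import Counter

# The ten EuroSAT class folders, sorted the way Categorize(sort=True) orders them
VOCAB = sorted(["AnnualCrop", "Forest", "HerbaceousVegetation", "Highway", "Industrial",
                "Pasture", "PermanentCrop", "Residential", "River", "SeaLake"])


def confusion_matrix(targets, preds, n_classes):
    """cm[actual][predicted] = number of items with that (actual, predicted) pair."""
    cm = [[0] * n_classes for _ in range(n_classes)]
    for t, p in zip(targets, preds):
        cm[t][p] += 1
    return cm


def most_confused(cm, vocab, min_val=1):
    """Off-diagonal (actual, predicted, count) cells with at least min_val items, largest first."""
    pairs = Counter({(vocab[a], vocab[p]): n
                     for a, row in enumerate(cm) for p, n in enumerate(row)
                     if a != p and n >= min_val})
    return [(a, p, n) for (a, p), n in pairs.most_common()]


# Toy example: hypothetical class indices, not this model's predictions
targets = [3, 3, 3, 8, 8, 0, 6, 6]
preds = [3, 8, 8, 8, 3, 0, 0, 6]
cm = confusion_matrix(targets, preds, len(VOCAB))
accuracy = sum(cm[i][i] for i in range(len(VOCAB))) / len(targets)
print(f"accuracy: {accuracy:.3f}")
print(most_confused(cm, VOCAB))
```

The diagonal of the matrix holds the correct predictions, so accuracy is the trace divided by the number of items; for the real model that ratio is the 0.980 reported by validate().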